The BlobSync library (on GitHub and NuGet) has been updated with some small improvements and fixes. The example executable, BlobSyncCmd, has also been updated; it can be compiled from the source or downloaded here.

This sample executable is really just a wrapper around the library, but it is a fairly useful tool in its own right. As a reminder, the theory behind BlobSync is covered in an earlier post. This post covers setting up the executable and some examples of what you can do with it.

First, download the latest release (version 0.2.1 at the time of writing) and extract it.

There are only 3 configuration values in the BlobSyncCmd.exe.config file:

– AzureAccountKey: the usual storage account key, available from the Azure Portal.

– AzureAccountName: the storage account name, again from the portal.

– SignatureSize: This defines the granularity (in bytes) at which files are uploaded to Azure Blob Storage (ABS). Each of these “blocks” can be replaced independently, which is the key to being able to update existing blobs. For example, if you set SignatureSize to 10000 (10k), upload a file, modify a single byte in the local copy and upload it again, then only the roughly 10k delta will be uploaded (instead of the entire file). The smaller this value, the smaller the bandwidth requirements, although a smaller value also limits the maximum size of the Azure blobs. This is because Azure block blobs (which BlobSync uses) can have at most 50000 blocks. So if you define a block (signature) to be 1k in size, the maximum size of the overall blob is 50000 * 1k = 50M.
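To make the SignatureSize trade-off concrete, the arithmetic can be sketched in a few lines of Python. This assumes, as the description above does, that each signature block maps to exactly one Azure block and that "k" means 1,000 bytes; the function names are purely illustrative and not part of BlobSync.

```python
# Illustrative arithmetic only; assumes one Azure block per signature block
# and "k" = 1,000 bytes, as in the SignatureSize description.
MAX_BLOCKS = 50_000  # Azure block blob limit on committed blocks

def max_blob_size(signature_size: int) -> int:
    """Largest blob, in bytes, that fits in MAX_BLOCKS blocks of signature_size bytes."""
    return MAX_BLOCKS * signature_size

def blocks_needed(file_size: int, signature_size: int) -> int:
    """Number of blocks a file of file_size bytes occupies (ceiling division)."""
    return -(-file_size // signature_size)

if __name__ == "__main__":
    for size in (1_000, 10_000):
        print(f"SignatureSize={size}: max blob {max_blob_size(size):,} bytes")
    # A 1410366-byte file split into hypothetical 10000-byte blocks
    print(blocks_needed(1_410_366, 10_000), "blocks")
```

With 1k blocks this gives 50,000,000 bytes, matching the 50M figure; raising SignatureSize to 10k lifts the cap tenfold at the cost of coarser deltas.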

BlobSyncCmd Examples:

I have a text file that is about 1.4M in size, which I upload using BlobSyncCmd:

Since this is a brand new file, all 1410366 bytes had to be uploaded.

Now I edit the file, modify a few lines and upload it again:

We can see that although the original upload was 1410366 bytes, the update only had to transfer 9959 bytes: a HUGE saving!

Now suppose someone else has the original file and wants to get our latest updates. They can simply update their existing OLD copy from the latest blob, downloading only what has changed.

Here we can see their older version of test.txt (called test-version1.txt) being updated against what is available in Azure Blob Storage. Instead of having to download all 1.4M, they only had to download 9999 bytes. Again, HUGE bandwidth savings!

During the upload, not only is the blob itself uploaded, but another “signature” blob is also generated and associated with the main blob. This signature blob contains the information used to determine which parts of a file can be reused and which need to be replaced, for both uploads and downloads. For experimentation purposes it is possible to generate these signature files and determine how much bandwidth *would* be used if a transfer were to happen.
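The post doesn't describe the signature blob's format, so the following Python sketch only illustrates the general idea: one entry per fixed-size block recording its position, length and a content hash. The `BlockSig` fields, the MD5 choice and the JSON serialization are all assumptions, not BlobSync's actual format (rsync-style schemes typically also store a cheap rolling checksum per block).

```python
# Illustrative only: field names, MD5 and JSON are assumptions,
# not BlobSync's real signature format.
import hashlib
import json
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class BlockSig:
    offset: int  # where the block starts in the original file
    size: int    # block length in bytes; only the last block can be shorter
    md5: str     # hash identifying the block's contents

def create_signature(data: bytes, signature_size: int) -> list[BlockSig]:
    """Describe each fixed-size block of data by its offset, length and MD5 hash."""
    sigs = []
    for offset in range(0, len(data), signature_size):
        block = data[offset:offset + signature_size]
        sigs.append(BlockSig(offset, len(block), hashlib.md5(block).hexdigest()))
    return sigs

def signature_to_json(sigs: list[BlockSig]) -> str:
    """Serialize a signature so it can be stored separately from the data."""
    return json.dumps([asdict(s) for s in sigs])

if __name__ == "__main__":
    sig = create_signature(b"hello world, " * 1000, 4096)
    print(len(sig), "blocks")
    print(signature_to_json(sig)[:80])
```

Because the signature holds only hashes, it is far smaller than the file it describes, which is what makes comparing against it cheap.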

So, repeating what we did earlier (but without actually transferring anything), suppose we have already uploaded a file, modified it locally, and now want to see how many bytes would be transferred for the update:

In this case we have testblob and an updated local test.txt file. According to the estimate, uploading the new version of test.txt would transfer 10039 bytes.

For the scenario where we haven’t uploaded ANYTHING to Azure Blob Storage but we want to see potential savings, we can do the following:

Here we use the ‘createsig’ command to generate a signature (the exact same signature that would have been uploaded to Azure Blob Storage) called c:\temp\test.txt.sig.

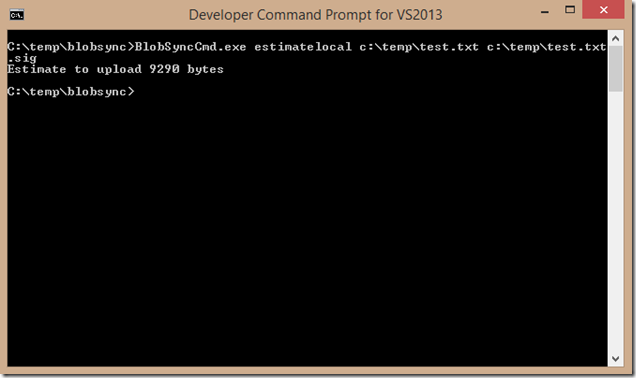

Now we modify test.txt and want to check how much would need to be uploaded to Azure Blob Storage IF we were to upload it. For this we can use the ‘estimatelocal’ command.

This command takes the original signature file (which would normally be retrieved from Azure Blob Storage) and checks it against the modified test.txt.

In the example above it tells us that we’d just need to upload 9290 bytes to perform the update.
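To give a feel for what an ‘estimatelocal’-style check involves, here is a simplified rsync-style estimate in Python. It slides a window over the modified file, uses a cheap rolling hash to spot candidate blocks from the original signature and confirms them with MD5; every byte not covered by a matching block counts as data to upload. The polynomial rolling hash, MD5 and the helper names (`weak_hash`, `make_signature`, `estimate_upload_bytes`) are assumptions for illustration, not BlobSync's actual code, and for brevity only full-size blocks of the original are indexed.

```python
# Simplified rsync-style estimate; a sketch, not BlobSync's implementation.
import hashlib
import random

BASE = 257
MOD = (1 << 61) - 1  # large prime modulus for the polynomial rolling hash

def weak_hash(block: bytes) -> int:
    """Cheap polynomial hash of a whole window (Horner's rule)."""
    h = 0
    for byte in block:
        h = (h * BASE + byte) % MOD
    return h

def make_signature(data: bytes, block_size: int) -> list[tuple[int, str]]:
    """(weak, md5) pairs for each full-size block of the original data."""
    return [
        (weak_hash(data[o:o + block_size]), hashlib.md5(data[o:o + block_size]).hexdigest())
        for o in range(0, len(data) - block_size + 1, block_size)
    ]

def estimate_upload_bytes(signature: list[tuple[int, str]], new_data: bytes, block_size: int) -> int:
    """Count bytes of new_data not covered by any block already in the signature."""
    index: dict[int, set[str]] = {}
    for weak, strong in signature:
        index.setdefault(weak, set()).add(strong)

    top = pow(BASE, block_size - 1, MOD)  # weight of the byte leaving the window
    n = len(new_data)
    literal = 0
    i = 0
    h = weak_hash(new_data[:block_size])
    while i + block_size <= n:
        if h in index and hashlib.md5(new_data[i:i + block_size]).hexdigest() in index[h]:
            # Existing block found: reuse it and jump past it.
            i += block_size
            h = weak_hash(new_data[i:i + block_size])
            continue
        # No match: this byte must be sent; roll the window one byte forward.
        literal += 1
        if i + block_size < n:
            h = ((h - new_data[i] * top) * BASE + new_data[i + block_size]) % MOD
        i += 1
    return literal + (n - i)  # a trailing partial window is always sent

if __name__ == "__main__":
    rng = random.Random(42)
    original = bytes(rng.getrandbits(8) for _ in range(10_000))
    sig = make_signature(original, 1_000)
    edited = original[:5_000] + bytes([original[5_000] ^ 0xFF]) + original[5_001:]
    inserted = original[:3_000] + b"HELLO" + original[3_000:]
    print(estimate_upload_bytes(sig, original, 1_000))  # 0
    print(estimate_upload_bytes(sig, edited, 1_000))    # 1000
    print(estimate_upload_bytes(sig, inserted, 1_000))  # 5
```

The insertion case shows why the sliding window matters: five inserted bytes shift every later block, yet only those five bytes count as new. A real transfer also carries block metadata and may send changed data as whole blocks, so this literal-byte count is only a rough approximation of what BlobSyncCmd reports.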

The ‘createsig’ and ‘estimatelocal’ commands are really just for testing various files and file types to see how well BlobSync would work in those scenarios.